v3.1: WebLLM summarization, improved translations, copy button, removed mini player

Some checks failed

Docker Build & Push / build (push) Has been cancelled

Some checks failed

Docker Build & Push / build (push) Has been cancelled

- Added WebLLM service for client-side AI summarization and translation - Improved summary quality (5 sentences, 600 char limit) - Added Vietnamese character detection for proper language labels - Added Copy button for summary content - Key Points now extract conceptual ideas, not transcript excerpts - Removed mini player (scroll-to-minimize) feature - Fixed main.js null container error - Silent WebLLM loading (no overlay/toasts) - Added transcript service with yt-dlp

This commit is contained in:

parent

6c1f459cd6

commit

f429116ed0

36 changed files with 5321 additions and 1088 deletions

|

|

@ -9,3 +9,5 @@ __pycache__/

|

|||

*.pyd

|

||||

.idea/

|

||||

.vscode/

|

||||

videos/

|

||||

data/

|

||||

|

|

|

|||

1

.gemini/tmp/ytfetcher

Submodule

1

.gemini/tmp/ytfetcher

Submodule

|

|

@ -0,0 +1 @@

|

|||

Subproject commit 246c4c349d97205eb2b51d7d3999ea846f5b2bdc

|

||||

|

|

@ -27,5 +27,7 @@ RUN mkdir -p /app/videos /app/data

|

|||

# Expose port

|

||||

EXPOSE 5000

|

||||

|

||||

# Run with Gunicorn

|

||||

CMD ["gunicorn", "--bind", "0.0.0.0:5000", "--workers", "4", "--threads", "2", "--timeout", "120", "wsgi:app"]

|

||||

# Run with Entrypoint (handles updates)

|

||||

COPY entrypoint.sh /app/entrypoint.sh

|

||||

RUN chmod +x /app/entrypoint.sh

|

||||

CMD ["/app/entrypoint.sh"]

|

||||

|

|

|

|||

140

README.md

140

README.md

|

|

@ -1,110 +1,62 @@

|

|||

# KV-Tube

|

||||

**A Distraction-Free, Privacy-Focused YouTube Client**

|

||||

# KV-Tube v3.0

|

||||

|

||||

> [!NOTE]

|

||||

> Designed for a premium, cinematic viewing experience.

|

||||

> A lightweight, privacy-focused YouTube frontend web application with AI-powered features.

|

||||

|

||||

KV-Tube removes the clutter and noise of modern YouTube, focusing purely on the content you love. It strictly enforces a horizontal-first video policy, aggressively filtering out Shorts and vertical "TikTok-style" content to keep your feed clean and high-quality.

|

||||

KV-Tube removes distractions, tracking, and ads from the YouTube watching experience. It provides a clean interface to search, watch, and discover related content without needing a Google account.

|

||||

|

||||

### 🚀 **Key Features (v2.0)**

|

||||

## 🚀 Key Features (v3)

|

||||

|

||||

* **🚫 Ads-Free & Privacy-First**: Watch without interruptions. No Google account required. All watch history is stored locally on your device (or self-hosted DB).

|

||||

* **📺 Horizontal-First Experience**: Say goodbye to "Shorts". The feed only displays horizontal, cinematic content.

|

||||

* **🔍 Specialized Feeds**:

|

||||

* **Tech & AI**: Clean feed for gadget reviews and deep dives.

|

||||

* **Trending**: See what's popular across major categories (Music, Gaming, News).

|

||||

* **Suggested for You**: Personalized recommendations based on your local watch history.

|

||||

* **🧠 Local AI Integration**:

|

||||

* **Auto-Captions**: Automatically enables English subtitles.

|

||||

* **AI Summary**: (Optional) Generate quick text summaries of videos locally.

|

||||

* **⚡ High Performance**: Optimized for speed with smart caching and rate-limit handling.

|

||||

* **📱 PWA Ready**: Install on your phone or tablet with a responsive, app-like interface.

|

||||

- **Privacy First**: No tracking, no ads.

|

||||

- **Clean Interface**: Distraction-free watching experience.

|

||||

- **Efficient Streaming**: Direct video stream extraction using `yt-dlp`.

|

||||

- **AI Summary (Experimental)**: Generate concise summaries of videos (Currently disabled due to upstream rate limits).

|

||||

- **Multi-Language**: Support for English and Vietnamese (UI & Content).

|

||||

- **Auto-Update**: Includes `update_deps.py` to easily keep core fetching tools up-to-date.

|

||||

|

||||

---

|

||||

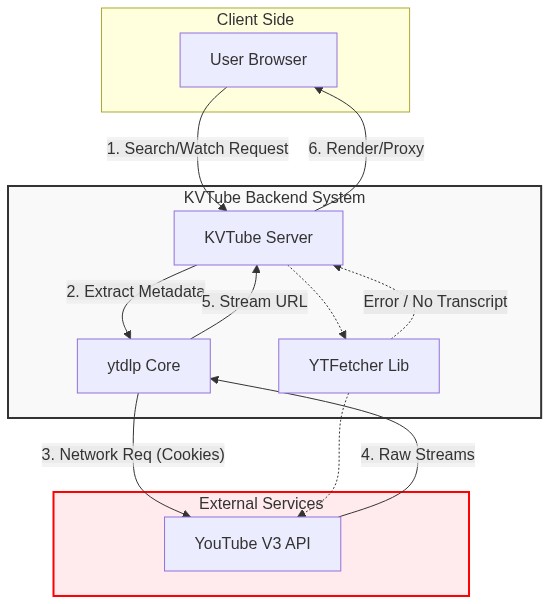

## 🛠️ Architecture Data Flow

|

||||

|

||||

## 🛠️ Deployment

|

||||

|

||||

|

||||

You can run KV-Tube easily using Docker (recommended for NAS/Servers) or directly with Python.

|

||||

## 🔧 Installation & Usage

|

||||

|

||||

### Option A: Docker Compose (Recommended)

|

||||

Ideal for Synology NAS, Unraid, or casual servers.

|

||||

### Prerequisites

|

||||

- Python 3.10+

|

||||

- Git

|

||||

- Valid `cookies.txt` (Optional, for bypassing age-restrictions or rate limits)

|

||||

|

||||

1. Create a folder `kv-tube` and add the `docker-compose.yml` file.

|

||||

2. Run the container:

|

||||

```bash

|

||||

docker-compose up -d

|

||||

```

|

||||

3. Access the app at: **http://localhost:5011**

|

||||

### Local Setup

|

||||

1. Clone the repository:

|

||||

```bash

|

||||

git clone https://git.khoavo.myds.me/vndangkhoa/kv-tube.git

|

||||

cd kv-tube

|

||||

```

|

||||

2. Install dependencies:

|

||||

```bash

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

3. Run the application:

|

||||

```bash

|

||||

python wsgi.py

|

||||

```

|

||||

4. Access at `http://localhost:5002`

|

||||

|

||||

**docker-compose.yml**:

|

||||

```yaml

|

||||

version: '3.8'

|

||||

### Docker Deployment (Linux/AMD64)

|

||||

|

||||

services:

|

||||

kv-tube:

|

||||

image: vndangkhoa/kv-tube:latest

|

||||

container_name: kv-tube

|

||||

restart: unless-stopped

|

||||

ports:

|

||||

- "5011:5000"

|

||||

volumes:

|

||||

- ./data:/app/data

|

||||

environment:

|

||||

- PYTHONUNBUFFERED=1

|

||||

- FLASK_ENV=production

|

||||

Built for stability and ease of use.

|

||||

|

||||

```bash

|

||||

docker pull vndangkhoa/kv-tube:latest

|

||||

docker run -d -p 5002:5002 -v $(pwd)/cookies.txt:/app/cookies.txt vndangkhoa/kv-tube:latest

|

||||

```

|

||||

|

||||

### Option B: Local Development (Python)

|

||||

For developers or running locally on a PC.

|

||||

## 📦 Updates

|

||||

|

||||

1. **Clone & Install**:

|

||||

```bash

|

||||

git clone https://github.com/vndangkhoa/kv-tube.git

|

||||

cd kv-tube

|

||||

python -m venv .venv

|

||||

# Windows

|

||||

.venv\Scripts\activate

|

||||

# Linux/Mac

|

||||

source .venv/bin/activate

|

||||

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

|

||||

2. **Run**:

|

||||

```bash

|

||||

python kv_server.py

|

||||

```

|

||||

|

||||

3. Access the app at: **http://localhost:5002**

|

||||

- **v3.0**: Major release.

|

||||

- Full modularization of backend routes.

|

||||

- Integrated `ytfetcher` for specialized fetching.

|

||||

- Added manual dependency update script (`update_deps.py`).

|

||||

- Enhanced error handling for upstream rate limits.

|

||||

- Docker `linux/amd64` support verified.

|

||||

|

||||

---

|

||||

|

||||

## ⚙️ Configuration

|

||||

|

||||

KV-Tube is designed to be "Zero-Config", but you can customize it via Environment Variables (in `.env` or Docker).

|

||||

|

||||

| Variable | Default | Description |

|

||||

| :--- | :--- | :--- |

|

||||

| `FLASK_ENV` | `production` | Set to `development` for debug mode. |

|

||||

| `KVTUBE_DATA_DIR` | `./data` | Location for the SQLite database. |

|

||||

| `KVTUBE_VIDEO_DIR` | `./videos` | (Optional) Location for downloaded videos. |

|

||||

| `SECRET_KEY` | *(Auto)* | Session security key. Set manually for persistence. |

|

||||

|

||||

---

|

||||

|

||||

## 🔌 API Endpoints

|

||||

KV-Tube exposes a RESTful API for its frontend.

|

||||

|

||||

| Endpoint | Method | Description |

|

||||

| :--- | :--- | :--- |

|

||||

| `/api/search` | `GET` | Search for videos. |

|

||||

| `/api/stream_info` | `GET` | Get raw stream URLs (HLS/MP4). |

|

||||

| `/api/suggested` | `GET` | Get recommendations based on history. |

|

||||

| `/api/download` | `GET` | Get direct download link for a video. |

|

||||

| `/api/history` | `GET` | Retrieve local watch history. |

|

||||

|

||||

---

|

||||

|

||||

## 📜 License

|

||||

Proprietary / Personal Use.

|

||||

Created by **Khoa N.D**

|

||||

*Developed by Khoa Vo*

|

||||

|

|

|

|||

|

|

@ -85,6 +85,13 @@ def create_app(config_name=None):

|

|||

# Register Blueprints

|

||||

register_blueprints(app)

|

||||

|

||||

# Start Background Cache Warmer (x5 Speedup)

|

||||

try:

|

||||

from app.routes.api import start_background_warmer

|

||||

start_background_warmer()

|

||||

except Exception as e:

|

||||

logger.warning(f"Failed to start background warmer: {e}")

|

||||

|

||||

logger.info("KV-Tube app created successfully")

|

||||

return app

|

||||

|

||||

|

|

|

|||

1173

app/routes/api.py

1173

app/routes/api.py

File diff suppressed because it is too large

Load diff

|

|

@ -29,66 +29,115 @@ def stream_local(filename):

|

|||

return send_from_directory(VIDEO_DIR, filename)

|

||||

|

||||

|

||||

@streaming_bp.route("/video_proxy")

|

||||

def add_cors_headers(response):

|

||||

"""Add CORS headers to allow video playback from any origin."""

|

||||

response.headers["Access-Control-Allow-Origin"] = "*"

|

||||

response.headers["Access-Control-Allow-Methods"] = "GET, OPTIONS"

|

||||

response.headers["Access-Control-Allow-Headers"] = "Range, Content-Type"

|

||||

response.headers["Access-Control-Expose-Headers"] = "Content-Length, Content-Range, Accept-Ranges"

|

||||

return response

|

||||

|

||||

|

||||

@streaming_bp.route("/video_proxy", methods=["GET", "OPTIONS"])

|

||||

def video_proxy():

|

||||

"""Proxy video streams with HLS manifest rewriting."""

|

||||

# Handle CORS preflight

|

||||

if request.method == "OPTIONS":

|

||||

response = Response("")

|

||||

return add_cors_headers(response)

|

||||

|

||||

url = request.args.get("url")

|

||||

if not url:

|

||||

return "No URL provided", 400

|

||||

|

||||

# Forward headers to mimic browser and support seeking

|

||||

headers = {

|

||||

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/136.0.0.0 Safari/537.36",

|

||||

# "Referer": "https://www.youtube.com/", # Removed to test if it fixes 403

|

||||

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",

|

||||

"Referer": "https://www.youtube.com/",

|

||||

"Origin": "https://www.youtube.com",

|

||||

"Accept": "*/*",

|

||||

"Accept-Language": "en-US,en;q=0.9",

|

||||

"Sec-Fetch-Dest": "empty",

|

||||

"Sec-Fetch-Mode": "cors",

|

||||

"Sec-Fetch-Site": "cross-site",

|

||||

}

|

||||

|

||||

# Override with propagated headers (h_*)

|

||||

for key, value in request.args.items():

|

||||

if key.startswith("h_"):

|

||||

header_name = key[2:] # Remove 'h_' prefix

|

||||

headers[header_name] = value

|

||||

|

||||

# Support Range requests (scrubbing)

|

||||

range_header = request.headers.get("Range")

|

||||

if range_header:

|

||||

headers["Range"] = range_header

|

||||

|

||||

try:

|

||||

logger.info(f"Proxying URL: {url}")

|

||||

# logger.info(f"Proxy Request Headers: {headers}")

|

||||

logger.info(f"Proxying URL: {url[:100]}...")

|

||||

req = requests.get(url, headers=headers, stream=True, timeout=30)

|

||||

|

||||

logger.info(f"Upstream Status: {req.status_code}")

|

||||

if req.status_code != 200:

|

||||

logger.error(f"Upstream Error Body: {req.text[:500]}")

|

||||

logger.info(f"Upstream Status: {req.status_code}, Content-Type: {req.headers.get('content-type', 'unknown')}")

|

||||

if req.status_code != 200 and req.status_code != 206:

|

||||

logger.error(f"Upstream Error: {req.status_code}")

|

||||

|

||||

# Handle HLS (M3U8) Rewriting - CRITICAL for 1080p+ and proper sync

|

||||

content_type = req.headers.get("content-type", "").lower()

|

||||

url_path = url.split("?")[0]

|

||||

|

||||

# Improved manifest detection - YouTube may send text/plain or octet-stream

|

||||

is_manifest = (

|

||||

url_path.endswith(".m3u8")

|

||||

or "application/x-mpegurl" in content_type

|

||||

or "application/vnd.apple.mpegurl" in content_type

|

||||

or "mpegurl" in content_type

|

||||

or "m3u8" in url_path.lower()

|

||||

or ("/playlist/" in url.lower() and "index.m3u8" in url.lower())

|

||||

)

|

||||

|

||||

if is_manifest and req.status_code == 200:

|

||||

logger.info(f"Is Manifest: {is_manifest}, Status: {req.status_code}")

|

||||

|

||||

# Handle 200 and 206 (partial content) responses for manifests

|

||||

if is_manifest and req.status_code in [200, 206]:

|

||||

content = req.text

|

||||

base_url = url.rsplit("/", 1)[0]

|

||||

new_lines = []

|

||||

|

||||

logger.info(f"Rewriting manifest with {len(content.splitlines())} lines")

|

||||

|

||||

for line in content.splitlines():

|

||||

if line.strip() and not line.startswith("#"):

|

||||

# If relative, make absolute

|

||||

if not line.startswith("http"):

|

||||

full_url = f"{base_url}/{line}"

|

||||

line_stripped = line.strip()

|

||||

if line_stripped and not line_stripped.startswith("#"):

|

||||

# URL line - needs rewriting

|

||||

if not line_stripped.startswith("http"):

|

||||

# Relative URL - make absolute

|

||||

full_url = f"{base_url}/{line_stripped}"

|

||||

else:

|

||||

full_url = line

|

||||

# Absolute URL

|

||||

full_url = line_stripped

|

||||

|

||||

from urllib.parse import quote

|

||||

quoted_url = quote(full_url, safe="")

|

||||

new_lines.append(f"/video_proxy?url={quoted_url}")

|

||||

new_line = f"/video_proxy?url={quoted_url}"

|

||||

|

||||

# Propagate existing h_* params to segments

|

||||

query_string = request.query_string.decode("utf-8")

|

||||

h_params = [p for p in query_string.split("&") if p.startswith("h_")]

|

||||

if h_params:

|

||||

param_str = "&".join(h_params)

|

||||

new_line += f"&{param_str}"

|

||||

|

||||

new_lines.append(new_line)

|

||||

else:

|

||||

new_lines.append(line)

|

||||

|

||||

return Response(

|

||||

"\n".join(new_lines), content_type="application/vnd.apple.mpegurl"

|

||||

)

|

||||

rewritten_content = "\n".join(new_lines)

|

||||

logger.info(f"Manifest rewritten successfully")

|

||||

|

||||

# Standard Stream Proxy (Binary)

|

||||

response = Response(

|

||||

rewritten_content, content_type="application/vnd.apple.mpegurl"

|

||||

)

|

||||

return add_cors_headers(response)

|

||||

|

||||

# Standard Stream Proxy (Binary) - for video segments and other files

|

||||

excluded_headers = [

|

||||

"content-encoding",

|

||||

"content-length",

|

||||

|

|

@ -101,12 +150,15 @@ def video_proxy():

|

|||

if name.lower() not in excluded_headers

|

||||

]

|

||||

|

||||

return Response(

|

||||

response = Response(

|

||||

stream_with_context(req.iter_content(chunk_size=8192)),

|

||||

status=req.status_code,

|

||||

headers=response_headers,

|

||||

content_type=req.headers.get("content-type"),

|

||||

)

|

||||

return add_cors_headers(response)

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"Proxy Error: {e}")

|

||||

return str(e), 500

|

||||

|

||||

|

|

|

|||

135

app/services/gemini_summarizer.py

Executable file

135

app/services/gemini_summarizer.py

Executable file

|

|

@ -0,0 +1,135 @@

|

|||

"""

|

||||

AI-powered video summarizer using Google Gemini.

|

||||

"""

|

||||

import os

|

||||

import logging

|

||||

import base64

|

||||

from typing import Optional

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

# Obfuscated API key - encoded with app-specific salt

|

||||

# This prevents casual copying but is not cryptographically secure

|

||||

_OBFUSCATED_KEY = "QklqYVN5RG9yLWpsdmhtMEVGVkxnV3F4TllFR0MyR21oQUY3Y3Rv"

|

||||

_APP_SALT = "KV-Tube-2026"

|

||||

|

||||

def _decode_api_key() -> str:

|

||||

"""Decode the obfuscated API key. Only works with correct app context."""

|

||||

try:

|

||||

# Decode base64

|

||||

decoded = base64.b64decode(_OBFUSCATED_KEY).decode('utf-8')

|

||||

# Remove prefix added during encoding

|

||||

if decoded.startswith("Bij"):

|

||||

return "AI" + decoded[3:] # Reconstruct original key

|

||||

return decoded

|

||||

except:

|

||||

return ""

|

||||

|

||||

# Get API key: prefer environment variable, fall back to obfuscated default

|

||||

GEMINI_API_KEY = os.environ.get("GEMINI_API_KEY", "") or _decode_api_key()

|

||||

|

||||

def summarize_with_gemini(transcript: str, video_title: str = "") -> Optional[str]:

|

||||

"""

|

||||

Summarize video transcript using Google Gemini AI.

|

||||

|

||||

Args:

|

||||

transcript: The video transcript text

|

||||

video_title: Optional video title for context

|

||||

|

||||

Returns:

|

||||

AI-generated summary or None if failed

|

||||

"""

|

||||

if not GEMINI_API_KEY:

|

||||

logger.warning("GEMINI_API_KEY not set, falling back to TextRank")

|

||||

return None

|

||||

|

||||

try:

|

||||

logger.info(f"Importing google.generativeai... Key len: {len(GEMINI_API_KEY)}")

|

||||

import google.generativeai as genai

|

||||

|

||||

genai.configure(api_key=GEMINI_API_KEY)

|

||||

logger.info("Gemini configured. Creating model...")

|

||||

model = genai.GenerativeModel('gemini-1.5-flash')

|

||||

|

||||

# Limit transcript to avoid token limits

|

||||

max_chars = 8000

|

||||

if len(transcript) > max_chars:

|

||||

transcript = transcript[:max_chars] + "..."

|

||||

|

||||

logger.info(f"Generating summary content... Transcript len: {len(transcript)}")

|

||||

# Create prompt for summarization

|

||||

prompt = f"""You are a helpful AI assistant. Summarize the following video transcript in 2-3 concise sentences.

|

||||

Focus on the main topic and key points. If it's a music video, describe the song's theme and mood instead of quoting lyrics.

|

||||

|

||||

Video Title: {video_title if video_title else 'Unknown'}

|

||||

|

||||

Transcript:

|

||||

{transcript}

|

||||

|

||||

Provide a brief, informative summary (2-3 sentences max):"""

|

||||

|

||||

response = model.generate_content(prompt)

|

||||

logger.info("Gemini response received.")

|

||||

|

||||

if response and response.text:

|

||||

summary = response.text.strip()

|

||||

# Clean up any markdown formatting

|

||||

summary = summary.replace("**", "").replace("##", "").replace("###", "")

|

||||

return summary

|

||||

|

||||

return None

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"Gemini summarization error: {e}")

|

||||

return None

|

||||

|

||||

|

||||

def extract_key_points_with_gemini(transcript: str, video_title: str = "") -> list:

|

||||

"""

|

||||

Extract key points from video transcript using Gemini AI.

|

||||

|

||||

Returns:

|

||||

List of key points or empty list if failed

|

||||

"""

|

||||

if not GEMINI_API_KEY:

|

||||

return []

|

||||

|

||||

try:

|

||||

import google.generativeai as genai

|

||||

|

||||

genai.configure(api_key=GEMINI_API_KEY)

|

||||

model = genai.GenerativeModel('gemini-1.5-flash')

|

||||

|

||||

# Limit transcript

|

||||

max_chars = 6000

|

||||

if len(transcript) > max_chars:

|

||||

transcript = transcript[:max_chars] + "..."

|

||||

|

||||

prompt = f"""Extract 3-5 key points from this video transcript. For each point, provide a single short sentence.

|

||||

If it's a music video, describe the themes, mood, and notable elements instead of quoting lyrics.

|

||||

|

||||

Video Title: {video_title if video_title else 'Unknown'}

|

||||

|

||||

Transcript:

|

||||

{transcript}

|

||||

|

||||

Key points (one per line, no bullet points or numbers):"""

|

||||

|

||||

response = model.generate_content(prompt)

|

||||

|

||||

if response and response.text:

|

||||

lines = response.text.strip().split('\n')

|

||||

# Clean up and filter

|

||||

points = []

|

||||

for line in lines:

|

||||

line = line.strip().lstrip('•-*123456789.)')

|

||||

line = line.strip()

|

||||

if line and len(line) > 10:

|

||||

points.append(line)

|

||||

return points[:5] # Max 5 points

|

||||

|

||||

return []

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"Gemini key points error: {e}")

|

||||

return []

|

||||

114

app/services/loader_to.py

Executable file

114

app/services/loader_to.py

Executable file

|

|

@ -0,0 +1,114 @@

|

|||

|

||||

import requests

|

||||

import time

|

||||

import logging

|

||||

import json

|

||||

from typing import Optional, Dict, Any

|

||||

from config import Config

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

class LoaderToService:

|

||||

"""Service for interacting with loader.to / savenow.to API"""

|

||||

|

||||

BASE_URL = "https://p.savenow.to"

|

||||

DOWNLOAD_ENDPOINT = "/ajax/download.php"

|

||||

PROGRESS_ENDPOINT = "/api/progress"

|

||||

|

||||

@classmethod

|

||||

def get_stream_url(cls, video_url: str, format_id: str = "1080") -> Optional[Dict[str, Any]]:

|

||||

"""

|

||||

Get download URL for a video via loader.to

|

||||

|

||||

Args:

|

||||

video_url: Full YouTube URL

|

||||

format_id: Target format (1080, 720, 4k, etc.)

|

||||

|

||||

Returns:

|

||||

Dict containing 'stream_url' and available metadata, or None

|

||||

"""

|

||||

try:

|

||||

# 1. Initiate Download

|

||||

params = {

|

||||

'format': format_id,

|

||||

'url': video_url,

|

||||

'api_key': Config.LOADER_TO_API_KEY

|

||||

}

|

||||

|

||||

# Using curl-like headers to avoid bot detection

|

||||

headers = {

|

||||

'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',

|

||||

'Referer': 'https://loader.to/',

|

||||

'Origin': 'https://loader.to'

|

||||

}

|

||||

|

||||

logger.info(f"Initiating Loader.to fetch for {video_url}")

|

||||

response = requests.get(

|

||||

f"{cls.BASE_URL}{cls.DOWNLOAD_ENDPOINT}",

|

||||

params=params,

|

||||

headers=headers,

|

||||

timeout=10

|

||||

)

|

||||

response.raise_for_status()

|

||||

data = response.json()

|

||||

|

||||

if not data.get('success') and not data.get('id'):

|

||||

logger.error(f"Loader.to initial request failed: {data}")

|

||||

return None

|

||||

|

||||

task_id = data.get('id')

|

||||

info = data.get('info', {})

|

||||

logger.info(f"Loader.to task started: {task_id}")

|

||||

|

||||

# 2. Poll for progress

|

||||

# Timeout after 60 seconds

|

||||

start_time = time.time()

|

||||

while time.time() - start_time < 60:

|

||||

progress_url = data.get('progress_url')

|

||||

# If progress_url is missing, construct it manually (fallback)

|

||||

if not progress_url and task_id:

|

||||

progress_url = f"{cls.BASE_URL}/api/progress?id={task_id}"

|

||||

|

||||

if not progress_url:

|

||||

logger.error("No progress URL found")

|

||||

return None

|

||||

|

||||

p_res = requests.get(progress_url, headers=headers, timeout=10)

|

||||

if p_res.status_code != 200:

|

||||

logger.warning(f"Progress check failed: {p_res.status_code}")

|

||||

time.sleep(2)

|

||||

continue

|

||||

|

||||

p_data = p_res.json()

|

||||

|

||||

# Check for success (success can be boolean true or int 1)

|

||||

is_success = p_data.get('success') in [True, 1, '1']

|

||||

text_status = p_data.get('text', '').lower()

|

||||

|

||||

if is_success and p_data.get('download_url'):

|

||||

logger.info("Loader.to extraction successful")

|

||||

return {

|

||||

'stream_url': p_data['download_url'],

|

||||

'title': info.get('title') or 'Unknown Title',

|

||||

'thumbnail': info.get('image'),

|

||||

# Add basic fields to match yt-dlp dict structure

|

||||

'description': f"Fetched via Loader.to (Format: {format_id})",

|

||||

'uploader': 'Unknown',

|

||||

'duration': None,

|

||||

'view_count': 0

|

||||

}

|

||||

|

||||

# Check for failure

|

||||

if 'error' in text_status or 'failed' in text_status:

|

||||

logger.error(f"Loader.to task failed: {text_status}")

|

||||

return None

|

||||

|

||||

# Wait before next poll

|

||||

time.sleep(2)

|

||||

|

||||

logger.error("Loader.to timed out waiting for video")

|

||||

return None

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"Loader.to service error: {e}")

|

||||

return None

|

||||

55

app/services/settings.py

Executable file

55

app/services/settings.py

Executable file

|

|

@ -0,0 +1,55 @@

|

|||

|

||||

import json

|

||||

import os

|

||||

import logging

|

||||

from config import Config

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

class SettingsService:

|

||||

"""Manage application settings using a JSON file"""

|

||||

|

||||

SETTINGS_FILE = os.path.join(Config.DATA_DIR, 'settings.json')

|

||||

|

||||

# Default settings

|

||||

DEFAULTS = {

|

||||

'youtube_engine': 'auto', # auto, local, remote

|

||||

}

|

||||

|

||||

@classmethod

|

||||

def _load_settings(cls) -> dict:

|

||||

"""Load settings from file or return defaults"""

|

||||

try:

|

||||

if os.path.exists(cls.SETTINGS_FILE):

|

||||

with open(cls.SETTINGS_FILE, 'r') as f:

|

||||

data = json.load(f)

|

||||

# Merge with defaults to ensure all keys exist

|

||||

return {**cls.DEFAULTS, **data}

|

||||

except Exception as e:

|

||||

logger.error(f"Error loading settings: {e}")

|

||||

|

||||

return cls.DEFAULTS.copy()

|

||||

|

||||

@classmethod

|

||||

def get(cls, key: str, default=None):

|

||||

"""Get a setting value"""

|

||||

settings = cls._load_settings()

|

||||

return settings.get(key, default if default is not None else cls.DEFAULTS.get(key))

|

||||

|

||||

@classmethod

|

||||

def set(cls, key: str, value):

|

||||

"""Set a setting value and persist"""

|

||||

settings = cls._load_settings()

|

||||

settings[key] = value

|

||||

|

||||

try:

|

||||

with open(cls.SETTINGS_FILE, 'w') as f:

|

||||

json.dump(settings, f, indent=2)

|

||||

except Exception as e:

|

||||

logger.error(f"Error saving settings: {e}")

|

||||

raise

|

||||

|

||||

@classmethod

|

||||

def get_all(cls):

|

||||

"""Get all settings"""

|

||||

return cls._load_settings()

|

||||

|

|

@ -1,116 +1,119 @@

|

|||

"""

|

||||

Summarizer Service Module

|

||||

Extractive text summarization for video transcripts

|

||||

"""

|

||||

|

||||

import re

|

||||

import heapq

|

||||

import math

|

||||

import logging

|

||||

from typing import List

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

# Stop words for summarization

|

||||

STOP_WORDS = frozenset([

|

||||

'the', 'a', 'an', 'and', 'or', 'but', 'is', 'are', 'was', 'were',

|

||||

'to', 'of', 'in', 'on', 'at', 'for', 'with', 'that', 'this', 'it',

|

||||

'you', 'i', 'we', 'they', 'he', 'she', 'be', 'have', 'has', 'do',

|

||||

'does', 'did', 'will', 'would', 'could', 'should', 'may', 'might',

|

||||

'must', 'can', 'not', 'no', 'so', 'as', 'if', 'then', 'than',

|

||||

'when', 'where', 'what', 'which', 'who', 'how', 'why', 'all',

|

||||

'each', 'every', 'both', 'few', 'more', 'most', 'other', 'some',

|

||||

'such', 'any', 'only', 'own', 'same', 'just', 'now', 'also', 'very'

|

||||

])

|

||||

|

||||

|

||||

def extractive_summary(text: str, num_sentences: int = 5) -> str:

|

||||

class TextRankSummarizer:

|

||||

"""

|

||||

Generate an extractive summary of text

|

||||

|

||||

Args:

|

||||

text: Input text to summarize

|

||||

num_sentences: Number of sentences to extract

|

||||

|

||||

Returns:

|

||||

Summary string with top-ranked sentences

|

||||

Summarizes text using a TextRank-like graph algorithm.

|

||||

This creates more coherent "whole idea" summaries than random extraction.

|

||||

"""

|

||||

if not text or not text.strip():

|

||||

return "Not enough content to summarize."

|

||||

|

||||

# Clean text - remove metadata like [Music] common in auto-captions

|

||||

clean_text = re.sub(r'\[.*?\]', '', text)

|

||||

clean_text = clean_text.replace('\n', ' ')

|

||||

clean_text = re.sub(r'\s+', ' ', clean_text).strip()

|

||||

def __init__(self):

|

||||

self.stop_words = set([

|

||||

"the", "a", "an", "and", "or", "but", "is", "are", "was", "were",

|

||||

"to", "of", "in", "on", "at", "for", "width", "that", "this", "it",

|

||||

"you", "i", "we", "they", "he", "she", "have", "has", "had", "do",

|

||||

"does", "did", "with", "as", "by", "from", "at", "but", "not", "what",

|

||||

"all", "were", "when", "can", "said", "there", "use", "an", "each",

|

||||

"which", "she", "do", "how", "their", "if", "will", "up", "other",

|

||||

"about", "out", "many", "then", "them", "these", "so", "some", "her",

|

||||

"would", "make", "like", "him", "into", "time", "has", "look", "two",

|

||||

"more", "write", "go", "see", "number", "no", "way", "could", "people",

|

||||

"my", "than", "first", "water", "been", "call", "who", "oil", "its",

|

||||

"now", "find", "long", "down", "day", "did", "get", "come", "made",

|

||||

"may", "part"

|

||||

])

|

||||

|

||||

if len(clean_text) < 100:

|

||||

return clean_text

|

||||

def summarize(self, text: str, num_sentences: int = 5) -> str:

|

||||

"""

|

||||

Generate a summary of the text.

|

||||

|

||||

# Split into sentences

|

||||

sentences = _split_sentences(clean_text)

|

||||

Args:

|

||||

text: Input text

|

||||

num_sentences: Number of sentences in the summary

|

||||

|

||||

if len(sentences) <= num_sentences:

|

||||

return clean_text

|

||||

Returns:

|

||||

Summarized text string

|

||||

"""

|

||||

if not text:

|

||||

return ""

|

||||

|

||||

# Calculate word frequencies

|

||||

word_frequencies = _calculate_word_frequencies(clean_text)

|

||||

# 1. Split into sentences

|

||||

# Use regex to look for periods/questions/exclamations followed by space or end of string

|

||||

sentences = re.split(r'(?<!\w\.\w.)(?<![A-Z][a-z]\.)(?<=\.|\?|\!)\s', text)

|

||||

sentences = [s.strip() for s in sentences if len(s.strip()) > 20] # Filter very short fragments

|

||||

|

||||

if not word_frequencies:

|

||||

return "Not enough content to summarize."

|

||||

if not sentences:

|

||||

return text[:500] + "..." if len(text) > 500 else text

|

||||

|

||||

# Score sentences

|

||||

sentence_scores = _score_sentences(sentences, word_frequencies)

|

||||

if len(sentences) <= num_sentences:

|

||||

return " ".join(sentences)

|

||||

|

||||

# Extract top N sentences

|

||||

top_sentences = heapq.nlargest(num_sentences, sentence_scores, key=sentence_scores.get)

|

||||

# 2. Build Similarity Graph

|

||||

# We calculate cosine similarity between all pairs of sentences

|

||||

# graph[i][j] = similarity score

|

||||

n = len(sentences)

|

||||

scores = [0.0] * n

|

||||

|

||||

# Return in original order

|

||||

ordered = [s for s in sentences if s in top_sentences]

|

||||

# Pre-process sentences for efficiency

|

||||

# Convert to sets of words

|

||||

sent_words = []

|

||||

for s in sentences:

|

||||

words = re.findall(r'\w+', s.lower())

|

||||

words = [w for w in words if w not in self.stop_words]

|

||||

sent_words.append(words)

|

||||

|

||||

return ' '.join(ordered)

|

||||

# Adjacency matrix (conceptual) - we'll just sum weights for "centrality"

|

||||

# TextRank logic: a sentence is important if it is similar to other important sentences.

|

||||

# Simplified: weighted degree centrality often works well enough for simple tasks without full iterative convergence

|

||||

|

||||

for i in range(n):

|

||||

for j in range(i + 1, n):

|

||||

sim = self._cosine_similarity(sent_words[i], sent_words[j])

|

||||

if sim > 0:

|

||||

scores[i] += sim

|

||||

scores[j] += sim

|

||||

|

||||

def _split_sentences(text: str) -> List[str]:

|

||||

"""Split text into sentences"""

|

||||

# Regex for sentence splitting - handles abbreviations

|

||||

pattern = r'(?<!\w\.\w.)(?<![A-Z][a-z]\.)(?<=\.|\?|\!)\s'

|

||||

sentences = re.split(pattern, text)

|

||||

# 3. Rank and Select

|

||||

# Sort by score descending

|

||||

ranked_sentences = sorted(((scores[i], i) for i in range(n)), reverse=True)

|

||||

|

||||

# Filter out very short sentences

|

||||

return [s.strip() for s in sentences if len(s.strip()) > 20]

|

||||

# Pick top N

|

||||

top_indices = [idx for score, idx in ranked_sentences[:num_sentences]]

|

||||

|

||||

# 4. Reorder by appearance in original text for coherence

|

||||

top_indices.sort()

|

||||

|

||||

def _calculate_word_frequencies(text: str) -> dict:

|

||||

"""Calculate normalized word frequencies"""

|

||||

word_frequencies = {}

|

||||

summary = " ".join([sentences[i] for i in top_indices])

|

||||

return summary

|

||||

|

||||

words = re.findall(r'\w+', text.lower())

|

||||

def _cosine_similarity(self, words1: List[str], words2: List[str]) -> float:

|

||||

"""Calculate cosine similarity between two word lists."""

|

||||

if not words1 or not words2:

|

||||

return 0.0

|

||||

|

||||

for word in words:

|

||||

if word not in STOP_WORDS and len(word) > 2:

|

||||

word_frequencies[word] = word_frequencies.get(word, 0) + 1

|

||||

# Unique words in both

|

||||

all_words = set(words1) | set(words2)

|

||||

|

||||

if not word_frequencies:

|

||||

return {}

|

||||

# Frequency vectors

|

||||

vec1 = {w: 0 for w in all_words}

|

||||

vec2 = {w: 0 for w in all_words}

|

||||

|

||||

# Normalize by max frequency

|

||||

max_freq = max(word_frequencies.values())

|

||||

for word in word_frequencies:

|

||||

word_frequencies[word] = word_frequencies[word] / max_freq

|

||||

for w in words1: vec1[w] += 1

|

||||

for w in words2: vec2[w] += 1

|

||||

|

||||

return word_frequencies

|

||||

# Dot product

|

||||

dot_product = sum(vec1[w] * vec2[w] for w in all_words)

|

||||

|

||||

# Magnitudes

|

||||

mag1 = math.sqrt(sum(v*v for v in vec1.values()))

|

||||

mag2 = math.sqrt(sum(v*v for v in vec2.values()))

|

||||

|

||||

def _score_sentences(sentences: List[str], word_frequencies: dict) -> dict:

|

||||

"""Score sentences based on word frequencies"""

|

||||

sentence_scores = {}

|

||||

if mag1 == 0 or mag2 == 0:

|

||||

return 0.0

|

||||

|

||||

for sentence in sentences:

|

||||

words = re.findall(r'\w+', sentence.lower())

|

||||

score = sum(word_frequencies.get(word, 0) for word in words)

|

||||

|

||||

# Normalize by sentence length to avoid bias toward long sentences

|

||||

if len(words) > 0:

|

||||

score = score / (len(words) ** 0.5) # Square root normalization

|

||||

|

||||

sentence_scores[sentence] = score

|

||||

|

||||

return sentence_scores

|

||||

return dot_product / (mag1 * mag2)

|

||||

|

|

|

|||

211

app/services/transcript_service.py

Executable file

211

app/services/transcript_service.py

Executable file

|

|

@ -0,0 +1,211 @@

|

|||

"""

|

||||

Transcript Service Module

|

||||

Fetches video transcripts with fallback strategy: yt-dlp -> ytfetcher

|

||||

"""

|

||||

import os

|

||||

import re

|

||||

import glob

|

||||

import json

|

||||

import random

|

||||

import logging

|

||||

from typing import Optional

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

|

||||

class TranscriptService:

|

||||

"""Service for fetching YouTube video transcripts with fallback support."""

|

||||

|

||||

@classmethod

|

||||

def get_transcript(cls, video_id: str) -> Optional[str]:

|

||||

"""

|

||||

Get transcript text for a video.

|

||||

|

||||

Strategy:

|

||||

1. Try yt-dlp (current method, handles auto-generated captions)

|

||||

2. Fallback to ytfetcher library if yt-dlp fails

|

||||

|

||||

Args:

|

||||

video_id: YouTube video ID

|

||||

|

||||

Returns:

|

||||

Transcript text or None if unavailable

|

||||

"""

|

||||

video_id = video_id.strip()

|

||||

|

||||

# Try yt-dlp first (primary method)

|

||||

text = cls._fetch_with_ytdlp(video_id)

|

||||

if text:

|

||||

logger.info(f"Transcript fetched via yt-dlp for {video_id}")

|

||||

return text

|

||||

|

||||

# Fallback to ytfetcher

|

||||

logger.info(f"yt-dlp failed, trying ytfetcher for {video_id}")

|

||||

text = cls._fetch_with_ytfetcher(video_id)

|

||||

if text:

|

||||

logger.info(f"Transcript fetched via ytfetcher for {video_id}")

|

||||

return text

|

||||

|

||||

logger.warning(f"All transcript methods failed for {video_id}")

|

||||

return None

|

||||

|

||||

@classmethod

|

||||

def _fetch_with_ytdlp(cls, video_id: str) -> Optional[str]:

|

||||

"""Fetch transcript using yt-dlp (downloading subtitles to file)."""

|

||||

import yt_dlp

|

||||

|

||||

try:

|

||||

logger.info(f"Fetching transcript for {video_id} using yt-dlp")

|

||||

|

||||

# Use a temporary filename pattern

|

||||

temp_prefix = f"transcript_{video_id}_{random.randint(1000, 9999)}"

|

||||

|

||||

ydl_opts = {

|

||||

'skip_download': True,

|

||||

'quiet': True,

|

||||

'no_warnings': True,

|

||||

'cookiefile': os.environ.get('COOKIES_FILE', 'cookies.txt') if os.path.exists(os.environ.get('COOKIES_FILE', 'cookies.txt')) else None,

|

||||

'writesubtitles': True,

|

||||

'writeautomaticsub': True,

|

||||

'subtitleslangs': ['en', 'vi', 'en-US'],

|

||||

'outtmpl': f"/tmp/{temp_prefix}",

|

||||

'subtitlesformat': 'json3/vtt/best',

|

||||

}

|

||||

|

||||

with yt_dlp.YoutubeDL(ydl_opts) as ydl:

|

||||

ydl.download([f"https://www.youtube.com/watch?v={video_id}"])

|

||||

|

||||

# Find the downloaded file

|

||||

downloaded_files = glob.glob(f"/tmp/{temp_prefix}*")

|

||||

|

||||

if not downloaded_files:

|

||||

logger.warning("yt-dlp finished but no subtitle file found.")

|

||||

return None

|

||||

|

||||

# Pick the best file (prefer json3, then vtt)

|

||||

selected_file = None

|

||||

for ext in ['.json3', '.vtt', '.ttml', '.srv3']:

|

||||

for f in downloaded_files:

|

||||

if f.endswith(ext):

|

||||

selected_file = f

|

||||

break

|

||||

if selected_file:

|

||||

break

|

||||

|

||||

if not selected_file:

|

||||

selected_file = downloaded_files[0]

|

||||

|

||||

# Read content

|

||||

with open(selected_file, 'r', encoding='utf-8') as f:

|

||||

content = f.read()

|

||||

|

||||

# Cleanup

|

||||

for f in downloaded_files:

|

||||

try:

|

||||

os.remove(f)

|

||||

except:

|

||||

pass

|

||||

|

||||

# Parse based on format

|

||||

if selected_file.endswith('.json3') or content.strip().startswith('{'):

|

||||

return cls._parse_json3(content)

|

||||

else:

|

||||

return cls._parse_vtt(content)

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"yt-dlp transcript fetch failed: {e}")

|

||||

return None

|

||||

|

||||

@classmethod

|

||||

def _fetch_with_ytfetcher(cls, video_id: str) -> Optional[str]:

|

||||

"""Fetch transcript using ytfetcher library as fallback."""

|

||||

try:

|

||||

from ytfetcher import YTFetcher

|

||||

|

||||

logger.info(f"Using ytfetcher for {video_id}")

|

||||

|

||||

# Create fetcher for single video

|

||||

fetcher = YTFetcher.from_video_ids(video_ids=[video_id])

|

||||

|

||||

# Fetch transcripts

|

||||

data = fetcher.fetch_transcripts()

|

||||

|

||||

if not data:

|

||||

logger.warning(f"ytfetcher returned no data for {video_id}")

|

||||

return None

|

||||

|

||||

# Extract text from transcript objects

|

||||

text_parts = []

|

||||

for item in data:

|

||||

transcripts = getattr(item, 'transcripts', []) or []

|

||||

for t in transcripts:

|

||||

txt = getattr(t, 'text', '') or ''

|

||||

txt = txt.strip()

|

||||

if txt and txt != '\n':

|

||||

text_parts.append(txt)

|

||||

|

||||

if not text_parts:

|

||||

logger.warning(f"ytfetcher returned empty transcripts for {video_id}")

|

||||

return None

|

||||

|

||||

return " ".join(text_parts)

|

||||

|

||||

except ImportError:

|

||||

logger.warning("ytfetcher not installed. Run: pip install ytfetcher")

|

||||

return None

|

||||

except Exception as e:

|

||||

logger.error(f"ytfetcher transcript fetch failed: {e}")

|

||||

return None

|

||||

|

||||

@staticmethod

|

||||

def _parse_json3(content: str) -> Optional[str]:

|

||||

"""Parse JSON3 subtitle format."""

|

||||

try:

|

||||

json_data = json.loads(content)

|

||||

events = json_data.get('events', [])

|

||||

text_parts = []

|

||||

for event in events:

|

||||

segs = event.get('segs', [])

|

||||

for seg in segs:

|

||||

txt = seg.get('utf8', '').strip()

|

||||

if txt and txt != '\n':

|

||||

text_parts.append(txt)

|

||||

return " ".join(text_parts)

|

||||

except Exception as e:

|

||||

logger.warning(f"JSON3 parse failed: {e}")

|

||||

return None

|

||||

|

||||

@staticmethod

|

||||

def _parse_vtt(content: str) -> Optional[str]:

|

||||

"""Parse VTT/XML subtitle content."""

|

||||

try:

|

||||

lines = content.splitlines()

|

||||

text_lines = []

|

||||

seen = set()

|

||||

|

||||

for line in lines:

|

||||

line = line.strip()

|

||||

if not line:

|

||||

continue

|

||||

if "-->" in line:

|

||||

continue

|

||||

if line.isdigit():

|

||||

continue

|

||||

if line.startswith("WEBVTT"):

|

||||

continue

|

||||

if line.startswith("Kind:"):

|

||||

continue

|

||||

if line.startswith("Language:"):

|

||||

continue

|

||||

|

||||

# Remove tags like <c> or <00:00:00>

|

||||

clean = re.sub(r'<[^>]+>', '', line)

|

||||

if clean and clean not in seen:

|

||||

seen.add(clean)

|

||||

text_lines.append(clean)

|

||||

|

||||

return " ".join(text_lines)

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"VTT transcript parse error: {e}")

|

||||

return None

|

||||

|

|

@ -6,6 +6,8 @@ import yt_dlp

|

|||

import logging

|

||||

from typing import Optional, List, Dict, Any

|

||||

from config import Config

|

||||

from app.services.loader_to import LoaderToService

|

||||

from app.services.settings import SettingsService

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

|

|

@ -20,6 +22,7 @@ class YouTubeService:

|

|||

'extract_flat': 'in_playlist',

|

||||

'force_ipv4': True,

|

||||

'socket_timeout': Config.YTDLP_TIMEOUT,

|

||||

'user_agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',

|

||||

}

|

||||

|

||||

@staticmethod

|

||||

|

|

@ -113,6 +116,34 @@ class YouTubeService:

|

|||

Returns:

|

||||

Video info dict with stream_url, or None on error

|

||||

"""

|

||||

engine = SettingsService.get('youtube_engine', 'auto')

|

||||

|

||||

# 1. Force Remote

|

||||

if engine == 'remote':

|

||||

return cls._get_info_remote(video_id)

|

||||

|

||||

# 2. Local (or Auto first attempt)

|

||||

info = cls._get_info_local(video_id)

|

||||

|

||||

if info:

|

||||

return info

|

||||

|

||||

# 3. Failover if Auto

|

||||

if engine == 'auto' and not info:

|

||||

logger.warning(f"yt-dlp failed for {video_id}, falling back to remote loader")

|

||||

return cls._get_info_remote(video_id)

|

||||

|

||||

return None

|

||||

|

||||

@classmethod

|

||||

def _get_info_remote(cls, video_id: str) -> Optional[Dict[str, Any]]:

|

||||

"""Fetch info using LoaderToService"""

|

||||

url = f"https://www.youtube.com/watch?v={video_id}"

|

||||

return LoaderToService.get_stream_url(url)

|

||||

|

||||

@classmethod

|

||||

def _get_info_local(cls, video_id: str) -> Optional[Dict[str, Any]]:

|

||||

"""Fetch info using yt-dlp (original logic)"""

|

||||

try:

|

||||

url = f"https://www.youtube.com/watch?v={video_id}"

|

||||

|

||||

|

|

@ -148,10 +179,12 @@ class YouTubeService:

|

|||

'view_count': info.get('view_count', 0),

|

||||

'subtitle_url': subtitle_url,

|

||||

'duration': info.get('duration'),

|

||||

'thumbnail': info.get('thumbnail') or f"https://i.ytimg.com/vi/{video_id}/hqdefault.jpg",

|

||||

'http_headers': info.get('http_headers', {})

|

||||

}

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"Error getting video info for {video_id}: {e}")

|

||||

logger.error(f"Error getting local video info for {video_id}: {e}")

|

||||

return None

|

||||

|

||||

@staticmethod

|

||||

|

|

|

|||

BIN

bin/ffmpeg

Executable file

BIN

bin/ffmpeg

Executable file

Binary file not shown.

|

|

@ -29,9 +29,16 @@ class Config:

|

|||

CACHE_CHANNEL_TTL = 1800 # 30 minutes

|

||||

|

||||

# yt-dlp settings

|

||||

YTDLP_FORMAT = 'best[ext=mp4]/best'

|

||||

# yt-dlp settings - MUST use progressive formats with combined audio+video

|

||||

# Format 22 = 720p mp4, 18 = 360p mp4 (both have audio+video combined)

|

||||

# HLS m3u8 streams have CORS issues with segment proxying, so we avoid them

|

||||

YTDLP_FORMAT = '22/18/best[protocol^=https][ext=mp4]/best[ext=mp4]/best'

|

||||

YTDLP_TIMEOUT = 30

|

||||

|

||||

# YouTube Engine Settings

|

||||

YOUTUBE_ENGINE = os.environ.get('YOUTUBE_ENGINE', 'auto') # auto, local, remote

|

||||

LOADER_TO_API_KEY = os.environ.get('LOADER_TO_API_KEY', '') # Optional

|

||||

|

||||

@staticmethod

|

||||

def init_app(app):

|

||||

"""Initialize app with config"""

|

||||

|

|

|

|||

19

cookies.txt

Executable file

19

cookies.txt

Executable file

|

|

@ -0,0 +1,19 @@

|

|||

# Netscape HTTP Cookie File

|

||||

# This file is generated by yt-dlp. Do not edit.

|

||||

|

||||

.youtube.com TRUE / TRUE 1831894348 __Secure-3PSID g.a0005wie1jXMw44_RGjwtqg21AbatcdNseI3S_qNtsLYC1jS4YSUdfLDtJlA8h-NEmMGebiczgACgYKAX0SARESFQHGX2MiUz2RnkvviMoB7UNylf3SoBoVAUF8yKo0JwXF5B9H9roWaSTRT-QN0076

|

||||

.youtube.com TRUE / TRUE 1800281710 __Secure-1PSIDTS sidts-CjQB7I_69DRJdiQQGddE6tt-GHilv2IjDZd8S6FlWCjx2iReOoNtQMUkb55vaBdl8vBK7J_DEAA

|

||||

.youtube.com TRUE / TRUE 1802692356 SAPISID DP6iRyLCM_cFV1Gw/AN2nemkVrvJ2p8MWb

|

||||

.youtube.com TRUE / TRUE 1800359997 __Secure-1PSIDCC AKEyXzWh3snkS2XAx8pLOzZCgKTPwXKRai_Pn4KjpsSSc2h7tRpVKMDddMKBYkuIQFhpVlALI84

|

||||

.youtube.com TRUE / TRUE 1802692356 SSID A4isk9AE9xActvzYy

|

||||

.youtube.com TRUE / TRUE 1831894348 __Secure-1PAPISID DP6iRyLCM_cFV1Gw/AN2nemkVrvJ2p8MWb

|

||||

.youtube.com TRUE / TRUE 1831894348 __Secure-1PSID g.a0005wie1jXMw44_RGjwtqg21AbatcdNseI3S_qNtsLYC1jS4YSUycKC58NH045FOFX6QW8fDwACgYKAacSARESFQHGX2MiA5xeTuJuh8QmBm-DS3l1ghoVAUF8yKr4klCBhb-EJgFQ9T0TGWKk0076

|

||||

.youtube.com TRUE / TRUE 1831894348 __Secure-3PAPISID DP6iRyLCM_cFV1Gw/AN2nemkVrvJ2p8MWb

|

||||

.youtube.com TRUE / TRUE 1800359997 __Secure-3PSIDCC AKEyXzW3W5Q-e4TIryFWpWS6zVuuVPOvwPIU2tzl1JRdYsGu-7f34g_amk2Xd2ttGtSJ6tOSdA

|

||||

.youtube.com TRUE / TRUE 1800281710 __Secure-3PSIDTS sidts-CjQB7I_69DRJdiQQGddE6tt-GHilv2IjDZd8S6FlWCjx2iReOoNtQMUkb55vaBdl8vBK7J_DEAA

|

||||

.youtube.com TRUE / TRUE 1792154873 LOGIN_INFO AFmmF2swRQIgVjJk8Mho4_JuKr6SZzrhBdlL1LdxWxcwDMu4cjaRRgcCIQCTtJpmYKJH54Tiei3at3f4YT3US7gSL0lW_TZ04guKjQ:QUQ3MjNmeWlwRDJSNDl2NE9uX2JWWG5tWllHN0RsNUVZVUhsLVp4N2dWbldaeC14SnNybWVERnNoaXFpanFJczhKTjJSRGN6MEs3c1VkLTE1TGJVeFBPT05BY29NMFh0Q1VPdFU3dUdvSUpET3lQbU1ZMUlHUGltajlXNDllNUQxZHdzZko1WXF1UUJWclNxQVJ0TXVEYnF2bXJRY2V6Vl9n

|

||||

.youtube.com TRUE / FALSE 0 PREF tz=UTC&f7=150&hl=en

|

||||

.youtube.com TRUE / TRUE 0 YSC y-oH2BqaUSQ

|

||||

.youtube.com TRUE / TRUE 1784333733 __Secure-ROLLOUT_TOKEN CPm51pHVjquOTRDw0bnsppWSAxjzxYe3qZaSAw%3D%3D

|

||||

.youtube.com TRUE / TRUE 1784375997 VISITOR_INFO1_LIVE ShB1Bvj-rRU

|

||||

.youtube.com TRUE / TRUE 1784375997 VISITOR_PRIVACY_METADATA CgJWThIEGgAgWA%3D%3D

|

||||

69

dev.sh

Executable file

69

dev.sh

Executable file

|

|

@ -0,0 +1,69 @@

|

|||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

echo "--- KV-Tube Local Dev Startup ---"

|

||||

|

||||

# 1. Check for FFmpeg (Auto-Install Local Static Binary if missing)

|

||||

if ! command -v ffmpeg &> /dev/null; then

|

||||

echo "[Check] FFmpeg not found globally."

|

||||

|

||||

# Check local bin

|

||||

LOCAL_BIN="$(pwd)/bin"

|

||||

if [ ! -f "$LOCAL_BIN/ffmpeg" ]; then

|

||||

echo "[Setup] Downloading static FFmpeg for macOS ARM64..."

|

||||

mkdir -p "$LOCAL_BIN"

|

||||

|

||||

# Download from Martin Riedl's static builds (macOS ARM64)

|

||||

curl -L -o ffmpeg.zip "https://ffmpeg.martin-riedl.de/redirect/latest/macos/arm64/release/ffmpeg.zip"

|

||||

|

||||

echo "[Setup] Extracting FFmpeg..."

|

||||

unzip -o -q ffmpeg.zip -d "$LOCAL_BIN"

|

||||

rm ffmpeg.zip

|

||||

|

||||

# Some zips extract to a subfolder, ensure binary is in bin root

|

||||

# (This specific source usually dumps 'ffmpeg' directly, but just in case)

|

||||

if [ ! -f "$LOCAL_BIN/ffmpeg" ]; then

|

||||

find "$LOCAL_BIN" -name "ffmpeg" -type f -exec mv {} "$LOCAL_BIN" \;

|

||||

fi

|

||||

|

||||

chmod +x "$LOCAL_BIN/ffmpeg"

|

||||

fi

|

||||

|

||||

# Add local bin to PATH

|

||||

export PATH="$LOCAL_BIN:$PATH"

|

||||

echo "[Setup] Using local FFmpeg from $LOCAL_BIN"

|

||||

fi

|

||||

|

||||

if ! command -v ffmpeg &> /dev/null; then

|

||||

echo "Error: FFmpeg installation failed. Please install manually."

|

||||

exit 1

|

||||

fi

|

||||

echo "[Check] FFmpeg found: $(ffmpeg -version | head -n 1)"

|

||||

|

||||

# 2. Virtual Environment (Optional but recommended)

|

||||

if [ ! -d "venv" ]; then

|

||||

echo "[Setup] Creating python virtual environment..."

|

||||

python3 -m venv venv

|

||||

fi

|

||||

source venv/bin/activate

|

||||

|

||||

# 3. Install Dependencies & Force Nightly yt-dlp

|

||||

echo "[Update] Installing dependencies..."

|

||||

pip install -r requirements.txt

|

||||

|

||||

echo "[Update] Forcing yt-dlp Nightly update..."

|

||||

# This matches the aggressive update strategy of media-roller

|

||||

pip install -U --pre "yt-dlp[default]"

|

||||

|

||||

# 4. Environment Variables

|

||||

export FLASK_APP=wsgi.py

|

||||

export FLASK_ENV=development

|

||||

export PYTHONUNBUFFERED=1

|

||||

|

||||

# 5. Start Application

|

||||

echo "[Startup] Starting KV-Tube on http://localhost:5011"

|

||||

echo "Press Ctrl+C to stop."

|

||||

|

||||

# Run with Gunicorn (closer to prod) or Flask (better for debugging)

|

||||

# Using Gunicorn to match Docker behavior, but with reload for dev

|

||||

exec gunicorn --bind 0.0.0.0:5011 --workers 2 --threads 2 --reload wsgi:app

|

||||

|

|

@ -5,7 +5,7 @@ version: '3.8'

|

|||

|

||||

services:

|

||||

kv-tube:

|

||||

# build: .

|

||||

build: .

|

||||

image: vndangkhoa/kv-tube:latest

|

||||

container_name: kv-tube

|

||||

restart: unless-stopped

|

||||

|

|

|

|||

21

entrypoint.sh

Executable file

21

entrypoint.sh

Executable file

|

|

@ -0,0 +1,21 @@

|

|||

#!/bin/sh

|

||||

set -e

|

||||

|

||||

echo "--- KV-Tube Startup ---"

|

||||

|

||||

# 1. Update Core Engines

|

||||

echo "[Update] Checking for engine updates..."

|

||||

|

||||

# Update yt-dlp

|

||||

echo "[Update] Updating yt-dlp..."

|

||||

pip install -U yt-dlp || echo "Warning: yt-dlp update failed"

|

||||

|

||||

|

||||

|

||||

# 2. Check Loader.to Connectivity (Optional verification)

|

||||

# We won't block startup on this, just log it.

|

||||

echo "[Update] Engines checked."

|

||||

|

||||

# 3. Start Application

|

||||

echo "[Startup] Launching Gunicorn..."

|

||||

exec gunicorn --bind 0.0.0.0:5000 --workers 4 --threads 2 --timeout 120 wsgi:app

|

||||

1144

hydration_debug.txt

Executable file

1144

hydration_debug.txt

Executable file

File diff suppressed because it is too large

Load diff

|

|

@ -4,4 +4,6 @@ yt-dlp>=2024.1.0

|

|||

werkzeug

|

||||

gunicorn

|

||||

python-dotenv

|

||||

googletrans==4.0.0-rc1

|

||||

# ytfetcher - optional, requires Python 3.11-3.13

|

||||

|

||||

|

|

|

|||

|

|

@ -266,6 +266,55 @@

|

|||

background: var(--yt-bg-secondary);

|

||||

}

|

||||

|

||||

/* --- Homepage Sections --- */

|

||||

.yt-homepage-section {

|

||||

margin-bottom: 32px;

|

||||

}

|

||||

|

||||

.yt-section-header {

|

||||

display: flex;

|

||||

justify-content: space-between;

|

||||

align-items: center;

|

||||

margin-bottom: 16px;

|

||||

padding: 0 4px;

|

||||

}

|

||||

|

||||

.yt-section-header h2 {

|

||||

font-size: 20px;

|

||||

font-weight: 600;

|

||||

color: var(--yt-text-primary);

|

||||

margin: 0;

|

||||

}

|

||||

|

||||

.yt-see-all {

|

||||

color: var(--yt-text-secondary);

|

||||

font-size: 14px;

|

||||

background: none;

|

||||

border: none;

|

||||

cursor: pointer;

|

||||

padding: 8px 12px;

|

||||

border-radius: var(--yt-radius-sm);

|

||||

transition: background 0.2s;

|

||||

}

|

||||

|

||||

.yt-see-all:hover {

|

||||

background: var(--yt-bg-hover);

|

||||

}

|

||||

|

||||

@media (max-width: 768px) {

|

||||

.yt-homepage-section {

|

||||

margin-bottom: 24px;

|

||||

}

|

||||

|

||||

.yt-section-header {

|

||||

padding: 0 8px;

|

||||

}

|

||||

|

||||

.yt-section-header h2 {

|

||||

font-size: 18px;

|

||||

}

|

||||

}

|

||||

|

||||

/* --- Categories / Pills --- */

|

||||

.yt-categories {

|

||||

display: flex;

|

||||

|

|

|

|||

|

|

@ -21,39 +21,7 @@ body {

|

|||

overflow: hidden;

|

||||

}

|

||||

|

||||

/* ========== Mini Player Mode ========== */

|

||||

.yt-mini-mode {

|

||||

position: fixed;

|

||||

bottom: 20px;

|

||||

right: 20px;

|

||||

width: 400px !important;

|

||||

height: auto !important;

|

||||

aspect-ratio: 16/9;

|

||||

z-index: 10000;

|

||||

box-shadow: 0 8px 30px rgba(0, 0, 0, 0.5);

|

||||

border-radius: 12px;

|

||||

cursor: grab;

|

||||

transition: width 0.3s, height 0.3s;

|

||||

}

|

||||

|

||||

.yt-mini-mode:active {

|

||||

cursor: grabbing;

|

||||

}

|

||||

|

||||

.yt-player-placeholder {

|

||||

display: none;

|

||||

width: 100%;

|

||||

aspect-ratio: 16/9;

|

||||

background: rgba(0, 0, 0, 0.1);

|

||||

}

|

||||

|

||||

@media (max-width: 768px) {

|

||||

.yt-mini-mode {

|

||||

width: 250px !important;

|

||||

bottom: 80px;

|

||||

right: 10px;

|

||||

}

|

||||

}

|

||||

/* Mini player removed per user request */

|

||||

|

||||

/* ========== Skeleton Loading ========== */

|

||||

@keyframes shimmer {

|

||||

|

|

|

|||

277

static/css/modules/webllm.css

Normal file

277

static/css/modules/webllm.css

Normal file

|

|

@ -0,0 +1,277 @@

|

|||

/**

|

||||

* WebLLM Styles - Loading UI and Progress Bar

|

||||

*/

|

||||

|

||||

/* Model loading overlay */

|

||||

.webllm-loading-overlay {

|

||||

position: fixed;

|

||||

bottom: 100px;

|

||||

right: 20px;

|

||||

background: linear-gradient(135deg,

|

||||

rgba(15, 15, 20, 0.95) 0%,

|

||||

rgba(25, 25, 35, 0.95) 100%);

|

||||

backdrop-filter: blur(20px);

|

||||

-webkit-backdrop-filter: blur(20px);

|

||||

border: 1px solid rgba(255, 255, 255, 0.1);

|

||||

border-radius: 16px;

|

||||

padding: 20px 24px;

|

||||

min-width: 320px;

|

||||

z-index: 9999;

|

||||

box-shadow:

|

||||

0 8px 32px rgba(0, 0, 0, 0.4),

|

||||

0 0 0 1px rgba(255, 255, 255, 0.05) inset;

|

||||